Some of our most popular and enduring engineering stories show how we use GitLab technology to take small steps to achieve major upgrades, fixes, and integrations to improve upon GitLab features. These stories demonstrate one of our core values at GitLab, iteration – meaning we ship the smallest changes first. When it comes to building new features or introducing fixes at GitLab, our engineering team operates under the principle that incremental change drives the greatest value.

How we executed on milestone migrations

Azure to GCP

Azure simply was not cutting it for hosting GitLab.com, and we decided it was time to migrate GitLab over to Google Cloud Platform (GCP). This was no small decision or endeavor, and we documented our end-to-end process publicly in the hopes that other companies might learn from our experience. Read the blog post describing the migration to GCP, or watch the video below to learn more about this major migration.

Next, we explain how we analyzed data to see how GitLab.com was performing on GCP after this major migration. Turns out, GitLab.com availability improved by 61% post-migration.

Upgrading PostgreSQL

One of GitLab.com’s main PostgreSQL clusters needed a major version upgrade. We knew it wouldn’t be easy, but in May 2020, we pulled off a near-perfect execution of this substantial upgrade. In another blog post, we explain how the process unfolded, from planning to testing to full automation.

Moving to Kubernetes

Migrating GitLab.com over to Kubernetes was a painstaking and complex process. In one of our most popular blog posts last year, we share the trials and triumphs from the year after the migration.

Code detectives show their debugging work

GitLab engineering fellow Stan Hu explains how tracking down a bug in the Docker client library used in GitLab Runner taught him more about Docker, Golang, and even GitLab.

Back in 2018, a customer flagged an NFS bug that the Support team escalated to Stan and his fellow engineers. It took two weeks to hunt down the NFS bug that was disrupting the Linux kernel, and Stan chronicles the intricacies of his investigation in this blog post.

After GitLab.com users reported getting the same mysterious error message, our Scalability team rolled up their sleeves to figure out the origins of the message – and uncovered a complex problem.

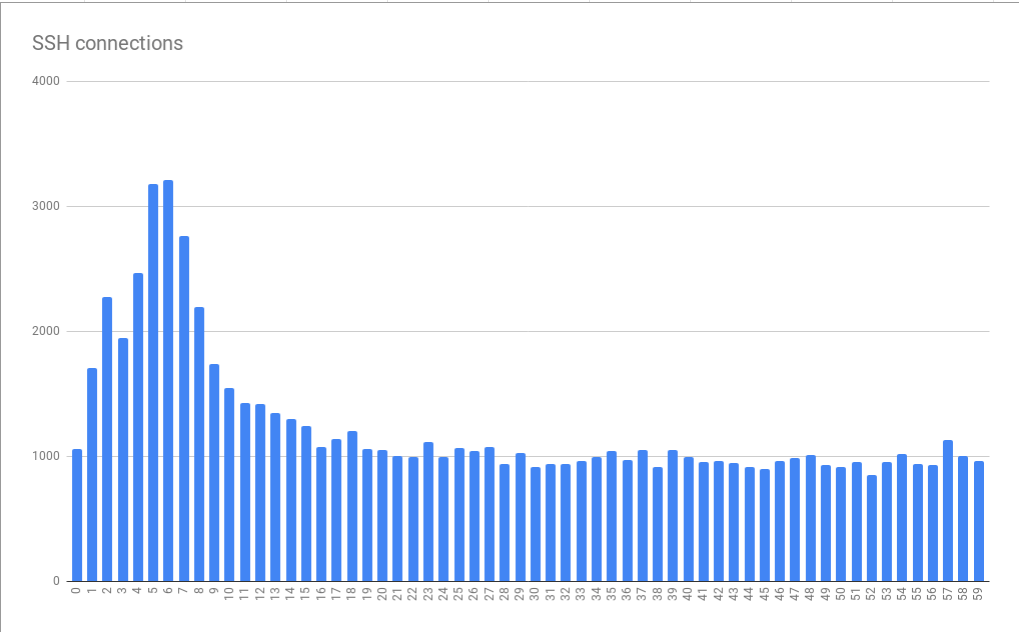

Graph showing connection errors, grouped by second-of-the-minute, indicates a lot of clustering going on in the time dimension.
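The grouping behind a graph like this can be sketched in a few lines of Python. This is only an illustrative sketch, not the Scalability team's actual tooling: the function name `errors_by_second_of_minute` and the sample timestamps are hypothetical. The idea is to discard everything about each error's timestamp except its second-of-the-minute (0 to 59) and count how many errors land in each bucket, so that errors recurring at the same point in every minute show up as spikes.

```python
from collections import Counter
from datetime import datetime, timezone


def errors_by_second_of_minute(timestamps):
    """Return a 60-slot list: slot s holds the number of events at second s of any minute."""
    counts = Counter(ts.second for ts in timestamps)
    return [counts.get(second, 0) for second in range(60)]


# Hypothetical sample data: three errors cluster around :10 and :11, one falls at :40.
sample = [
    datetime(2019, 1, 1, 12, 0, 10, tzinfo=timezone.utc),
    datetime(2019, 1, 1, 12, 1, 10, tzinfo=timezone.utc),
    datetime(2019, 1, 1, 12, 2, 11, tzinfo=timezone.utc),
    datetime(2019, 1, 1, 12, 3, 40, tzinfo=timezone.utc),
]

histogram = errors_by_second_of_minute(sample)
```

If errors were spread evenly, every slot in `histogram` would hold roughly the same count. Tall, narrow peaks suggest something periodic is triggering them.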


There were six key lessons we learned while debugging this scaling problem on GitLab.com.

Using data for anomaly detection

Two years ago we switched over from our legacy NFS file-sharing service to Gitaly, and soon we noticed that our Gitaly service was lagging.

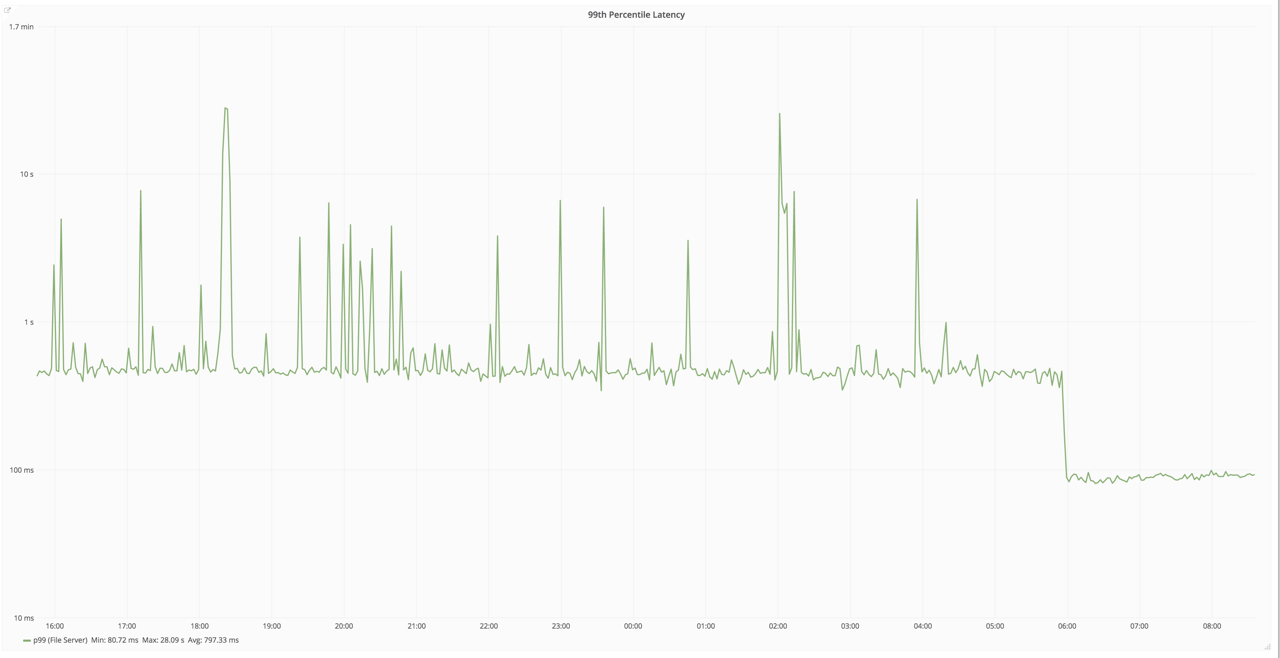

We noticed that the 99th percentile performance of the gRPC endpoint for Gitaly service had dropped from 400ms down to 100ms for an unknown reason.


Through solid application monitoring, we were able to identify the problem and quickly fix it. Unpack the process behind the Gitaly fix in this popular blog post.

Prometheus reports on time-series data, which can be used for anomaly detection and alerting. Learn how you can use this data to set up analysis and alerting with Prometheus and use the code snippets to try it out in your own system.
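To give a feel for what anomaly detection on time-series data can look like, here is a minimal Python sketch of one common approach: a z-score computed against a trailing window. It is an assumption-laden illustration, not the PromQL from the blog post. The function name `find_anomalies`, the window size, the threshold of three standard deviations, and the sample values are all hypothetical, and the samples stand in for values you might export from a Prometheus series.

```python
from statistics import mean, pstdev


def find_anomalies(samples, window=5, threshold=3.0):
    """Flag samples whose z-score against the preceding `window` samples exceeds `threshold`.

    Returns a list of (index, z_score) tuples.
    """
    anomalies = []
    for i in range(window, len(samples)):
        history = samples[i - window:i]
        mu = mean(history)
        sigma = pstdev(history)
        if sigma == 0:
            # A perfectly flat history makes the z-score undefined, so skip it.
            continue
        z = (samples[i] - mu) / sigma
        if abs(z) > threshold:
            anomalies.append((i, z))
    return anomalies


# Hypothetical request-rate samples with one obvious spike at index 6.
request_rate = [100, 102, 98, 101, 99, 100, 250, 101]
anomalies = find_anomalies(request_rate)
```

A trailing window keeps the baseline local, so slow drifts in traffic are not flagged. The trade-off is that a spike inflates the baseline for the next few samples, which can mask follow-on anomalies.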

Inside GitLab

GitLab co-founder Dmitriy Zaporozhets built GitLab on Ruby on Rails, despite working mostly in PHP at the time. In this foundational blog post, our GitLab CEO, Sid Sijbrandij, explains why building on Rails was the best decision for GitLab.

We built our Web IDE to make it easier to edit code using GitLab. Explore how we took the GitLab Web IDE from an experiment to a working feature.

The extensions and integrations that power us

How we built a VS Code extension

After a survey revealed that VS Code was the most-used tool by our Frontend team, we decided to build a VS Code extension that works with GitLab. Learn how we built the VS Code extension in a series of iterations.

Soon, we found out our VS Code extension was very popular. So we wrote a blog post explaining how users can develop their own extensions with VS Code and GitLab.

Challenges with Elasticsearch

Elasticsearch enables global code search on GitLab.com and lets us run advanced syntax search and advanced global search of our codebase. But we ran into trouble with GitLab’s integration with Elasticsearch and hit some dead ends on our first attempt at the integration. We recalibrated, learned from our mistakes, and made a second attempt a few months later.

Dogfooding at GitLab

The engineering productivity team at GitLab built Insights to examine trends in the GitLab.com issue tracker at a high level, but soon realized Insights could be useful to our GitLab Ultimate users. Watch the video below or read the blog post to explore the origins of Insights.

How we reimagined the technical interview

The trouble with technical interviews is that they rarely reflect the job you’re interviewing for. Learn how former GitLab team member Clement Ho reimagined the technical interview for Frontend engineers.

How troubleshooting and security modeling can prevent disaster

In a major feat of coordination, our globally distributed engineering team managed to work synchronously to troubleshoot an issue with our HashiCorp Consul, successfully avoiding any significant problems, including the outage we anticipated. Read "The consul outage that never happened" to learn how they did it.

Our Red team at GitLab is continually searching for vulnerabilities, big and small, and introduces patches to address them. In one of our most popular 2020 posts, our security team explains how an attacker who has already gained unauthorized access to the cloud platform might be able to take advantage of GCP privileges, and how replicating this breach scenario could help you prevent this from happening on your GCP instance.

Did we miss something? Share a link to your favorite GitLab engineering story below and check out our round-up of some of our top stories about how to apply GitLab technology.